We also examined numerical methods such as the Runge-Kutta methods, which are used to solve initial-value problems for ordinary differential equations. However, these problems focused only on solving nonlinear equations in one variable, rather than nonlinear equations in several variables. The goal of this paper is to examine.

In computational mathematics, an iterative method is a mathematical procedure that uses an initial guess to generate a sequence of improving approximate solutions for a class of problems, in which the n-th approximation is derived from the previous ones. A specific implementation of an iterative method, including the termination criteria, is an algorithm of the iterative method. An iterative method is called convergent if the corresponding sequence converges for given initial approximations. A mathematically rigorous convergence analysis of an iterative method is usually performed; however, heuristic-based iterative methods are also common.

In contrast, direct methods attempt to solve the problem by a finite sequence of operations. In the absence of rounding errors, direct methods would deliver an exact solution (like solving a linear system of equations by Gaussian elimination). Iterative methods are often the only choice for nonlinear equations. However, iterative methods are often useful even for linear problems involving many variables (sometimes of the order of millions), where direct methods would be prohibitively expensive (and in some cases impossible) even with the best available computing power.[1]

Attractive fixed points

If an equation can be put into the form $f(x) = x$, and a solution $x$ is an attractive fixed point of the function $f$, then one may begin with a point $x_1$ in the basin of attraction of $x$, and let $x_{n+1} = f(x_n)$ for $n \ge 1$; the sequence $\{x_n\}_{n \ge 1}$ will then converge to the solution $x$. Here $x_n$ is the $n$th approximation or iterate of $x$ and $x_{n+1}$ is the next, $(n+1)$th, iterate. Alternatively, superscripts in parentheses are often used in numerical methods, so as not to interfere with subscripts with other meanings (for example, $x^{(n+1)} = f(x^{(n)})$). If the function $f$ is continuously differentiable, a sufficient condition for convergence is that the spectral radius of the derivative is strictly bounded by one in a neighborhood of the fixed point. If this condition holds at the fixed point, then a sufficiently small neighborhood (basin of attraction) must exist.
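The iteration described above can be sketched in a few lines of Python. This is only an illustration: the helper name `fixed_point`, the stopping rule (difference of successive iterates below `tol`) and the choice of the cosine function as a test case are assumptions made for this example, not part of the original text.

```python
import math

def fixed_point(f, x1, tol=1e-12, max_iter=1000):
    """Iterate x_{n+1} = f(x_n) until two successive iterates differ by < tol."""
    x = x1
    for n in range(1, max_iter + 1):
        x_next = f(x)
        if abs(x_next - x) < tol:
            return x_next, n
        x = x_next
    raise RuntimeError("no convergence within max_iter iterations")

# cos has an attractive fixed point near 0.739, because
# |cos'(x)| = |sin(x)| < 1 in a neighborhood of that point.
root, steps = fixed_point(math.cos, 1.0)
```

Starting points outside the basin of attraction, or functions whose derivative exceeds one in magnitude at the fixed point, would make this loop run into the `max_iter` limit instead of converging.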

Linear systems

In the case of a system of linear equations, the two main classes of iterative methods are the stationary iterative methods, and the more general Krylov subspace methods.

Stationary iterative methods

Introduction

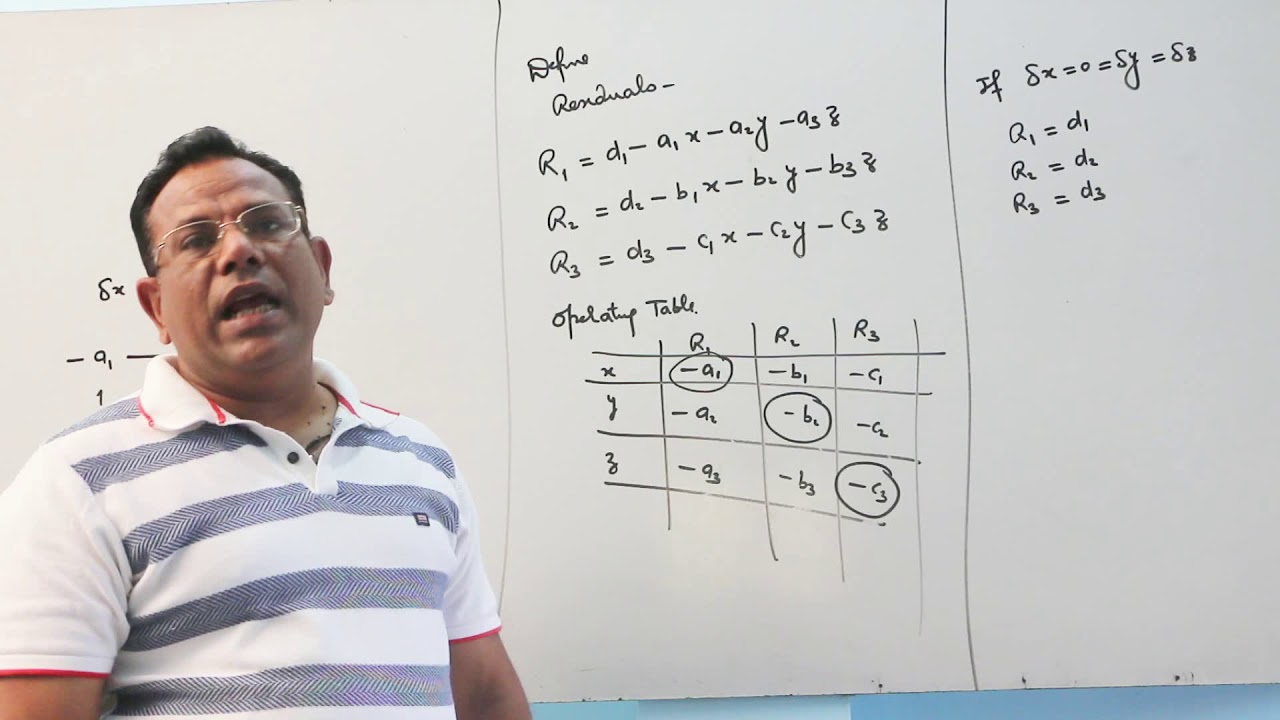

Stationary iterative methods solve a linear system with an operator approximating the original one; and based on a measurement of the error in the result (the residual), form a 'correction equation' for which this process is repeated. While these methods are simple to derive, implement, and analyze, convergence is only guaranteed for a limited class of matrices.

Definition

An iterative method is defined by

$$x^{k+1} := \Psi(x^k), \quad k \ge 0,$$

and for a given linear system $Ax = b$ with exact solution $x^*$ the error by

$$e^k := x^k - x^*, \quad k \ge 0.$$

An iterative method is called linear if there exists a matrix $C \in \mathbb{R}^{n \times n}$ such that

$$e^{k+1} = C e^k \quad \text{for all } k \ge 0,$$

and this matrix is called the iteration matrix. An iterative method with a given iteration matrix $C$ is called convergent if the following holds:

$$\lim_{k \to \infty} C^k = 0.$$

An important theorem states that a given iterative method with iteration matrix $C$ is convergent if and only if its spectral radius $\rho(C)$ is smaller than unity, that is,

$$\rho(C) < 1.$$

The basic iterative methods work by splitting the matrix $A$ into

$$A = M - N,$$

where the matrix $M$ should be easily invertible. The iterative methods are now defined as

$$M x^{k+1} = N x^k + b, \quad k \ge 0.$$

From this it follows that the iteration matrix is given by

$$C = I - M^{-1} A = M^{-1} N.$$
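As a small worked illustration of the convergence criterion, consider a 2-by-2 system whose matrix is split into its diagonal part and the remainder. The matrix and the symbol names $A$, $M$, $N$, $C$ below are assumptions chosen for this example, not values from the source.

```latex
\begin{aligned}
A &= \begin{pmatrix} 4 & 1 \\ 2 & 3 \end{pmatrix}, \qquad
M = \begin{pmatrix} 4 & 0 \\ 0 & 3 \end{pmatrix}, \qquad
N = M - A = \begin{pmatrix} 0 & -1 \\ -2 & 0 \end{pmatrix}, \\[4pt]
C &= M^{-1} N = \begin{pmatrix} 0 & -\tfrac{1}{4} \\ -\tfrac{2}{3} & 0 \end{pmatrix}, \qquad
\det(C - \lambda I) = \lambda^2 - \tfrac{1}{6} = 0, \\[4pt]
\rho(C) &= \tfrac{1}{\sqrt{6}} \approx 0.408 < 1 .
\end{aligned}
```

Since the spectral radius is below one, the iteration built on this splitting converges for this matrix, with the error shrinking by a factor of roughly 0.41 per step in the long run.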

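Examples

Basic examples of stationary iterative methods use a splitting of the matrix $A$ such as

$$A = D + L + U, \qquad D := \operatorname{diag}\big((a_{ii})_i\big),$$

where $D$ is only the diagonal part of $A$, and $L$ is the strict lower triangular part of $A$. Respectively, $U$ is the strict upper triangular part of $A$.

- Richardson method: $M := \frac{1}{\omega} I \quad (\omega \neq 0)$

- Jacobi method: $M := D$

- Damped Jacobi method: $M := \frac{1}{\omega} D \quad (\omega \neq 0)$

- Gauss–Seidel method: $M := D + L$

- Successive over-relaxation method (SOR): $M := \frac{1}{\omega} D + L \quad (\omega \neq 0)$

- Symmetric successive over-relaxation (SSOR): $M := \frac{1}{\omega (2 - \omega)} (D + \omega L) D^{-1} (D + \omega U) \quad (\omega \notin \{0, 2\})$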

Linear stationary iterative methods are also called relaxation methods.
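To make two of the methods listed above concrete, the following sketch implements one sweep of the Jacobi method and one sweep of the Gauss–Seidel method on dense Python lists. The function names, the stopping rule and the 3-by-3 test system are assumptions made for illustration; the test matrix is strictly diagonally dominant, a case in which both methods are known to converge.

```python
def jacobi_step(A, b, x):
    """One Jacobi sweep: every component is computed from the old iterate only."""
    n = len(b)
    return [
        (b[i] - sum(A[i][j] * x[j] for j in range(n) if j != i)) / A[i][i]
        for i in range(n)
    ]

def gauss_seidel_step(A, b, x):
    """One Gauss-Seidel sweep: freshly updated components are used immediately."""
    n = len(b)
    x = list(x)  # work on a copy so the caller's iterate is unchanged
    for i in range(n):
        s = sum(A[i][j] * x[j] for j in range(n) if j != i)
        x[i] = (b[i] - s) / A[i][i]
    return x

def iterate(step, A, b, tol=1e-10, max_iter=500):
    """Repeat a sweep until the largest change in any component is below tol."""
    x = [0.0] * len(b)
    for k in range(1, max_iter + 1):
        x_new = step(A, b, x)
        if max(abs(u - v) for u, v in zip(x_new, x)) < tol:
            return x_new, k
        x = x_new
    raise RuntimeError("no convergence within max_iter sweeps")

# Hypothetical test system with exact solution (1, 2, 3).
A = [[4.0, -1.0, 0.0], [-1.0, 4.0, -1.0], [0.0, -1.0, 4.0]]
b = [2.0, 4.0, 10.0]
x_jacobi, sweeps_jacobi = iterate(jacobi_step, A, b)
x_gs, sweeps_gs = iterate(gauss_seidel_step, A, b)
```

On this system Gauss–Seidel needs fewer sweeps than Jacobi, which matches the usual experience for such matrices, although neither method is guaranteed to converge for arbitrary input.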

Krylov subspace methods

Krylov subspace methods work by forming a basis of the sequence of successive matrix powers times the initial residual (the Krylov sequence). The approximations to the solution are then formed by minimizing the residual over the subspace formed. The prototypical method in this class is the conjugate gradient method (CG), which assumes that the system matrix is symmetric positive-definite. For symmetric (and possibly indefinite) matrices one works with the minimal residual method (MINRES). For nonsymmetric matrices, methods such as the generalized minimal residual method (GMRES) and the biconjugate gradient method (BiCG) have been derived.
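A minimal sketch of the conjugate gradient method for a small symmetric positive-definite system is given below. It uses plain Python lists and the textbook recurrences; the function name, the tolerance, the iteration cap and the 2-by-2 test system are assumptions for illustration, and no preconditioning or safeguards against loss of conjugacy are included.

```python
def conjugate_gradient(A, b, tol=1e-12, max_iter=None):
    """Solve A x = b for symmetric positive-definite A (dense list-of-lists)."""
    n = len(b)
    if max_iter is None:
        max_iter = 10 * n

    def matvec(v):
        return [sum(A[i][j] * v[j] for j in range(n)) for i in range(n)]

    def dot(u, v):
        return sum(ui * vi for ui, vi in zip(u, v))

    x = [0.0] * n
    r = list(b)          # residual b - A x for the starting guess x = 0
    p = list(r)          # first search direction
    rs_old = dot(r, r)
    for _ in range(max_iter):
        if rs_old ** 0.5 < tol:
            break
        Ap = matvec(p)
        alpha = rs_old / dot(p, Ap)              # step length along p
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * api for ri, api in zip(r, Ap)]
        rs_new = dot(r, r)
        # New direction: residual plus a multiple of the old direction.
        p = [ri + (rs_new / rs_old) * pi for ri, pi in zip(r, p)]
        rs_old = rs_new
    return x

# Hypothetical SPD test system; its exact solution is (1/11, 7/11).
A_spd = [[4.0, 1.0], [1.0, 3.0]]
b_spd = [1.0, 2.0]
x_cg = conjugate_gradient(A_spd, b_spd)
```

For this 2-by-2 system the loop reaches the solution after two steps, up to rounding.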

Convergence of Krylov subspace methods

Since these methods form a basis, it is evident that the method converges in N iterations, where N is the system size. However, in the presence of rounding errors this statement does not hold; moreover, in practice N can be very large, and the iterative process reaches sufficient accuracy already far earlier. The analysis of these methods is hard, depending on a complicated function of the spectrum of the operator.

Preconditioners

The approximating operator that appears in stationary iterative methods can also be incorporated in Krylov subspace methods such as GMRES (alternatively, preconditioned Krylov methods can be considered as accelerations of stationary iterative methods), where they become transformations of the original operator to a presumably better conditioned one. The construction of preconditioners is a large research area.

History

Probably the first iterative method for solving a linear system appeared in a letter of Gauss to a student of his. He proposed solving a 4-by-4 system of equations by repeatedly solving the component in which the residual was the largest.

The theory of stationary iterative methods was solidly established with the work of D.M. Young starting in the 1950s. The Conjugate Gradient method was also invented in the 1950s, with independent developments by Cornelius Lanczos, Magnus Hestenes and Eduard Stiefel, but its nature and applicability were misunderstood at the time. Only in the 1970s was it realized that conjugacy based methods work very well for partial differential equations, especially the elliptic type.

References

- [1] Amritkar, Amit; de Sturler, Eric; Świrydowicz, Katarzyna; Tafti, Danesh; Ahuja, Kapil (2015). "Recycling Krylov subspaces for CFD applications and a new hybrid recycling solver". Journal of Computational Physics. 303: 222. arXiv:1501.03358. Bibcode:2015JCoPh.303..222A. doi:10.1016/j.jcp.2015.09.040.

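Definitions and Basics

A linear equation system is a set of linear equations to be solved simultaneously. A linear equation takes the form

$$a_1 x_1 + a_2 x_2 + \cdots + a_n x_n = b,$$

where the coefficients $a_1, \dots, a_n$ and $b$ are constants and $x_1, \dots, x_n$ are the $n$ unknowns. Following the notation above, a system of linear equations is denoted as

$$\begin{aligned}
a_{11} x_1 + a_{12} x_2 + \cdots + a_{1n} x_n &= b_1 \\
a_{21} x_1 + a_{22} x_2 + \cdots + a_{2n} x_n &= b_2 \\
&\;\;\vdots \\
a_{m1} x_1 + a_{m2} x_2 + \cdots + a_{mn} x_n &= b_m
\end{aligned}$$

This system consists of $m$ linear equations, each with $n + 1$ coefficients, and has $n$ unknowns which have to fulfill the set of equations simultaneously. To simplify notation, it is possible to rewrite the above equations in matrix notation:

$$A x = b$$

The elements of the $m \times n$ matrix $A$ are the coefficients $a_{ij}$ of the equations, and the vectors $x$ and $b$ have the elements $x_j$ and $b_i$ respectively. In this notation each row forms a linear equation.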

Over- and Under-Determined Systems

In order for a solution to be unique, there must be at least as many equations as unknowns. In terms of matrix notation this means that $m \ge n$. However, if a system contains more equations than unknowns ($m > n$) it is very likely (not to say the rule) that there exists no solution at all. Such systems are called over-determined since they have more equations than unknowns. They require special mathematical methods to solve approximately. The most common one is the least-squares method, which aims at minimizing the sum of the squared errors (residuals) left in the equations when trying to solve the system. Such problems commonly occur in measurement or data fitting processes.

Example

Assume an arbitrary triangle: suppose one measures all three inner angles with a limited precision. Furthermore, assume that the lengths of the sides a, b and c are known exactly. From trigonometry it is known that, using the law of cosines, one can compute an angle or the length of a side if all the other sides and angles are known. But as is known from geometry, the inner angles of a planar triangle must always add up to $180^\circ$. So we have three laws of cosines and the rule for the sum of angles. This makes a total of four equations and three unknowns, which gives an over-determined problem.
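The least-squares idea for over-determined systems can be sketched on a simpler data-fitting problem: four measurements, two unknowns (intercept and slope of a straight line). The function name `fit_line` and the data values are assumptions for this illustration; the solution is obtained from the 2-by-2 normal equations, which is fine for a tiny example but is not the numerically preferred route for larger problems.

```python
def fit_line(ts, ys):
    """Least-squares fit of y ~ c0 + c1 * t via the 2x2 normal equations."""
    m = len(ts)
    st = sum(ts)
    stt = sum(t * t for t in ts)
    sy = sum(ys)
    sty = sum(t * y for t, y in zip(ts, ys))
    # Normal equations: [[m, st], [st, stt]] @ [c0, c1] = [sy, sty],
    # solved here with Cramer's rule.
    det = m * stt - st * st  # zero only if all t values coincide
    c0 = (stt * sy - st * sty) / det
    c1 = (m * sty - st * sy) / det
    return c0, c1

# Four equations, two unknowns: no line passes through all four points.
ts = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 8.0]
c0, c1 = fit_line(ts, ys)
```

For these data the fit gives an intercept of 0.8 and a slope of 2.3; the residuals of the four equations are not zero, but their sum of squares is as small as possible.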

On the other hand, if $m < n$ the problem arises that the solution is not unique, since at least one unknown can be chosen freely. Again, mathematical methods exist to treat such problems. However, they will not be covered in this text.

This chapter will mainly concentrate on the case where $m = n$ and assumes so unless mentioned otherwise.

Exact Solution of Linear Systems

Solving a system $Ax = b$ in terms of linear algebra is easy: just multiply the system with $A^{-1}$ from the left, resulting in $x = A^{-1} b$. However, finding $A^{-1}$ is (except for trivial cases) very hard. The following sections describe methods to find an exact (up to rounding errors) solution to the problem.

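Diagonal and Triangular Systems

A diagonal matrix has nonzero entries only on the main diagonal:

$$A = \begin{pmatrix} a_{11} & 0 & \cdots & 0 \\ 0 & a_{22} & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & a_{nn} \end{pmatrix}$$

The inverse of $A$ in such a case is simply a diagonal matrix with inverse entries, meaning

$$A^{-1} = \begin{pmatrix} \frac{1}{a_{11}} & 0 & \cdots & 0 \\ 0 & \frac{1}{a_{22}} & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & \frac{1}{a_{nn}} \end{pmatrix}$$

It follows that a diagonal system has the solution $x_i = b_i / a_{ii}$, which is very easy to compute.

An upper triangular system is defined as

$$\begin{pmatrix} a_{11} & a_{12} & \cdots & a_{1n} \\ 0 & a_{22} & \cdots & a_{2n} \\ \vdots & \vdots & \ddots & \vdots \\ 0 & 0 & \cdots & a_{nn} \end{pmatrix} x = b$$

and a lower triangular system as

$$\begin{pmatrix} a_{11} & 0 & \cdots & 0 \\ a_{21} & a_{22} & \cdots & 0 \\ \vdots & \vdots & \ddots & \vdots \\ a_{n1} & a_{n2} & \cdots & a_{nn} \end{pmatrix} x = b$$

Backward-substitution is the process of solving an upper triangular system, starting with the last unknown:

$$x_i = \frac{1}{a_{ii}} \left( b_i - \sum_{j=i+1}^{n} a_{ij} x_j \right), \quad i = n, n-1, \dots, 1$$

Forward-substitution, on the other hand, is the same procedure for lower triangular systems, starting with the first unknown:

$$x_i = \frac{1}{a_{ii}} \left( b_i - \sum_{j=1}^{i-1} a_{ij} x_j \right), \quad i = 1, 2, \dots, n$$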
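Both substitution procedures translate almost directly into code. The sketch below assumes dense list-of-lists matrices with nonzero diagonal entries; the function names and the two small test systems (each with exact solution (1, 2, 3)) are made up for this illustration.

```python
def back_substitution(U, b):
    """Solve U x = b for an upper triangular U, last unknown first."""
    n = len(b)
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(U[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (b[i] - s) / U[i][i]
    return x

def forward_substitution(L, b):
    """Solve L x = b for a lower triangular L, first unknown first."""
    n = len(b)
    x = [0.0] * n
    for i in range(n):
        s = sum(L[i][j] * x[j] for j in range(i))
        x[i] = (b[i] - s) / L[i][i]
    return x

# Hypothetical test systems.
U = [[2.0, 1.0, -1.0], [0.0, 3.0, 2.0], [0.0, 0.0, 4.0]]
x_upper = back_substitution(U, [1.0, 12.0, 12.0])

L = [[2.0, 0.0, 0.0], [1.0, 3.0, 0.0], [-1.0, 2.0, 4.0]]
x_lower = forward_substitution(L, [2.0, 7.0, 15.0])
```

Each routine needs on the order of $n^2$ operations, which is why reducing a general system to triangular form is worthwhile.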

Gauss-Jordan Elimination

Instead of finding $A^{-1}$, this method relies on row operations. According to the laws of linear algebra, the rows of an equation system can be multiplied by a nonzero constant without changing the solution. Additionally, the rows can be added to and subtracted from one another. This leads to the idea of changing the system in such a way that $A$ has a structure which allows for easy solving for $x$. One such structure would be a diagonal matrix as mentioned above.

Gauss-Jordan Elimination brings the matrix $A$ into diagonal form. To simplify the procedure, one often uses an adapted scheme. First, the matrix $A$ and the right-hand vector $b$ are combined into the augmented matrix

$$\left( A \mid b \right) = \left( \begin{array}{cccc|c} a_{11} & a_{12} & \cdots & a_{1n} & b_1 \\ a_{21} & a_{22} & \cdots & a_{2n} & b_2 \\ \vdots & \vdots & \ddots & \vdots & \vdots \\ a_{n1} & a_{n2} & \cdots & a_{nn} & b_n \end{array} \right)$$

To illustrate, an easy-to-understand yet efficient algorithm can be built from four basic components:

- gelim: the main function iterates through a stack of reduced equations building up the complete solution one variable at a time, through a series of partial solutions.

- stack: calls reduce repeatedly, producing a stack of reduced equations, ordered from smallest (2 elements, such as <ax = b>) to largest.

- solve: solves for one variable, given a reduced equation and a partial solution. For example, given the reduced equation <aw bx cy = d> and the partial solution <x y>, w = (d - bx - cy)/a. Now the partial solution <w x y> is available for the next round, e.g. <au bv cw dx = e>.

- reduce: takes the first equation off the top and pushes it onto the stack; then produces a residual - a reduced matrix, by subtracting the elements of the original, first equation from corresponding elements of the remaining, lower equations, e.g. b[j][k]/b[j][0] - a[k]/a[0]. As you can see, this eliminates the first element in each of the lower equations by subtracting one from one, and only the remaining elements need be kept - ultimately, the residual is an output matrix with one less row, and one less column than the input matrix. It is then used as the input for the next iteration.

It should be noted that multiplication could also be used in place of division; however, on larger matrices (e.g. n=10), this has a cascading effect that produces infinities and NaNs. The division, looked at statistically, has the effect of normalizing the reduced matrices, producing numbers with a mean closer to zero and a smaller standard deviation; for randomly generated data, this produces reduced matrices with entries in the vicinity of ±1.

Continuation still to be written

As it stands, this shows that it is not necessary to bring a system into full diagonal form. It is sufficient to bring it into triangular (either upper or lower) form, since it can then be solved by backward or forward substitution respectively.
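The following sketch shows elimination to upper triangular form followed by backward substitution, working on the augmented matrix. It is not the gelim/stack/solve/reduce scheme described above: the function name `gauss_solve` is hypothetical, and the row swaps (partial pivoting) are an addition commonly used to limit rounding problems, not something the text prescribes.

```python
def gauss_solve(A, b):
    """Solve A x = b by elimination to upper triangular form plus back substitution."""
    n = len(b)
    # Augmented matrix (A | b); copied so the inputs are left untouched.
    M = [list(map(float, row)) + [float(bi)] for row, bi in zip(A, b)]
    for k in range(n):
        # Partial pivoting: move the largest remaining entry of column k to row k.
        p = max(range(k, n), key=lambda r: abs(M[r][k]))
        if M[p][k] == 0.0:
            raise ValueError("matrix is singular")
        M[k], M[p] = M[p], M[k]
        # Subtract multiples of row k to zero out column k below the diagonal.
        for i in range(k + 1, n):
            factor = M[i][k] / M[k][k]
            for j in range(k, n + 1):
                M[i][j] -= factor * M[k][j]
    # Backward substitution on the resulting upper triangular system.
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(M[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x

# Hypothetical test system with exact solution (2, 3, -1).
A_ge = [[2, 1, -1], [-3, -1, 2], [-2, 1, 2]]
b_ge = [8, -11, -3]
x_ge = gauss_solve(A_ge, b_ge)
```

Stopping at triangular form roughly halves the elimination work compared with carrying on to a full diagonal matrix.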

LU-Factorization

- This section needs to be written

Approximate Solution of Linear Systems

- This section needs to be written

Jacobi Method

It is an iterative scheme.

Gauss-Seidel Method

- This section needs to be written

SOR Algorithm[edit]

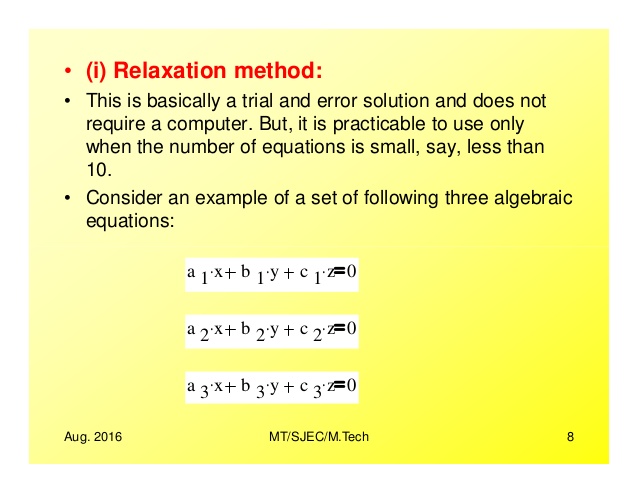

SOR is an abbreviation for the Successive Over Relaxation. It is an iterative scheme that uses a relaxation parameter and is a generalization of the Gauss-Seidel method in the special case .

Given a square system of n linear equations with unknown x:

where:

Then A can be decomposed into a diagonal component D, and strictly lower and upper triangular components L and U:

where

The system of linear equations may be rewritten as:

for a constant ω > 1.

The method of successive over-relaxation is an iterative technique that solves the left hand side of this expression for x, using previous value for x on the right hand side. Analytically, this may be written as:

However, by taking advantage of the triangular form of (D+ωL), the elements of x(k+1) can be computed sequentially using forward substitution:

The choice of relaxation factor is not necessarily easy, and depends upon the properties of the coefficient matrix. For symmetric, positive-definite matrices it can be proven that 0 < ω < 2 will lead to convergence, but we are generally interested in faster convergence rather than just convergence.
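A minimal sketch of the component-wise SOR sweep is shown below. Each new component is a weighted mix of the old value and the Gauss-Seidel update, with weights $1 - \omega$ and $\omega$. The function name, the stopping rule, the test matrix and the two values of ω tried are assumptions for illustration; the matrix is symmetric positive-definite, so any 0 < ω < 2 should converge.

```python
def sor(A, b, omega, tol=1e-10, max_iter=1000):
    """Successive over-relaxation; omega = 1 gives the Gauss-Seidel method."""
    n = len(b)
    x = [0.0] * n
    for k in range(1, max_iter + 1):
        change = 0.0
        for i in range(n):
            # x[j] already holds the new value for j < i and the old one for j > i.
            s = sum(A[i][j] * x[j] for j in range(n) if j != i)
            x_new = (1.0 - omega) * x[i] + omega * (b[i] - s) / A[i][i]
            change = max(change, abs(x_new - x[i]))
            x[i] = x_new
        if change < tol:
            return x, k
    raise RuntimeError("no convergence within max_iter sweeps")

# Hypothetical symmetric positive-definite test system, exact solution (1, 2, 3).
A_sor = [[4.0, -1.0, 0.0], [-1.0, 4.0, -1.0], [0.0, -1.0, 4.0]]
b_sor = [2.0, 4.0, 10.0]
x_gs_like, sweeps_gs_like = sor(A_sor, b_sor, omega=1.0)
x_over, sweeps_over = sor(A_sor, b_sor, omega=1.2)
```

Comparing the sweep counts for several values of ω on a given matrix is a simple empirical way to look for a good relaxation factor when no analytical estimate is available.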

Conjugate Gradients

- This section needs to be written

Multigrid Methods

- This section needs to be written
